Merry Christmas, Code Wolfpack! Today we’re going to focus on a new algorithm that is a twist on an existing approach. The Double-Ratchet Protocol, created by Signal for the purpose of securing two-party conversations has seen wide-scale adoption by many messengers, and for good reason: it provides a great deal of advantages previous approaches did not, in the form of future and perfect forward secrecy. In this session, we will review exactly how the Double-Ratchet Protocol works, and how we will generalize this into the multi-party case. Fasten your seatbelts, we’re flying into some bumpy math.

First, a primer on some basic cryptography

It’s important to review some basics, as these will serve as the bedrock of the math we will use going forward. While the proofs and cryptanalysis involved are significantly more complex, understanding even some simple fundamentals will make the rest of this significantly easier.

Algebraic Concepts

If you’ve taken a class on number theory, you can go ahead and skip this part. For everyone else, we’re going to condense some ideas down into what matters for today. Consider the integers: 0, 1, 2, 3, ... You very likely know the basic operations over the integers: addition, multiplication, etc. like 1 + 1 = 2. So far so good. Consider that these operations do not need to limit themselves to strictly the integers. We recognize this to be true with the reals as well, i.e. 3.2810 + 4.182 = 7.463. The interesting thing is that these properties are not limited to just the number line. Extending to the 2D plane, addition is still really straightforward: (1,2) + (4,8) = (5,10). These properties feel intuitive, mostly because visual metaphors for counting are also intuitive to us, and is typically how addition and multiplication is taught. When you generalize the properties of addition, there are many other systems in which the rules still work! One of those systems happens to be over points on an elliptic curve (video link), the system of which is going to be the focus of this article.

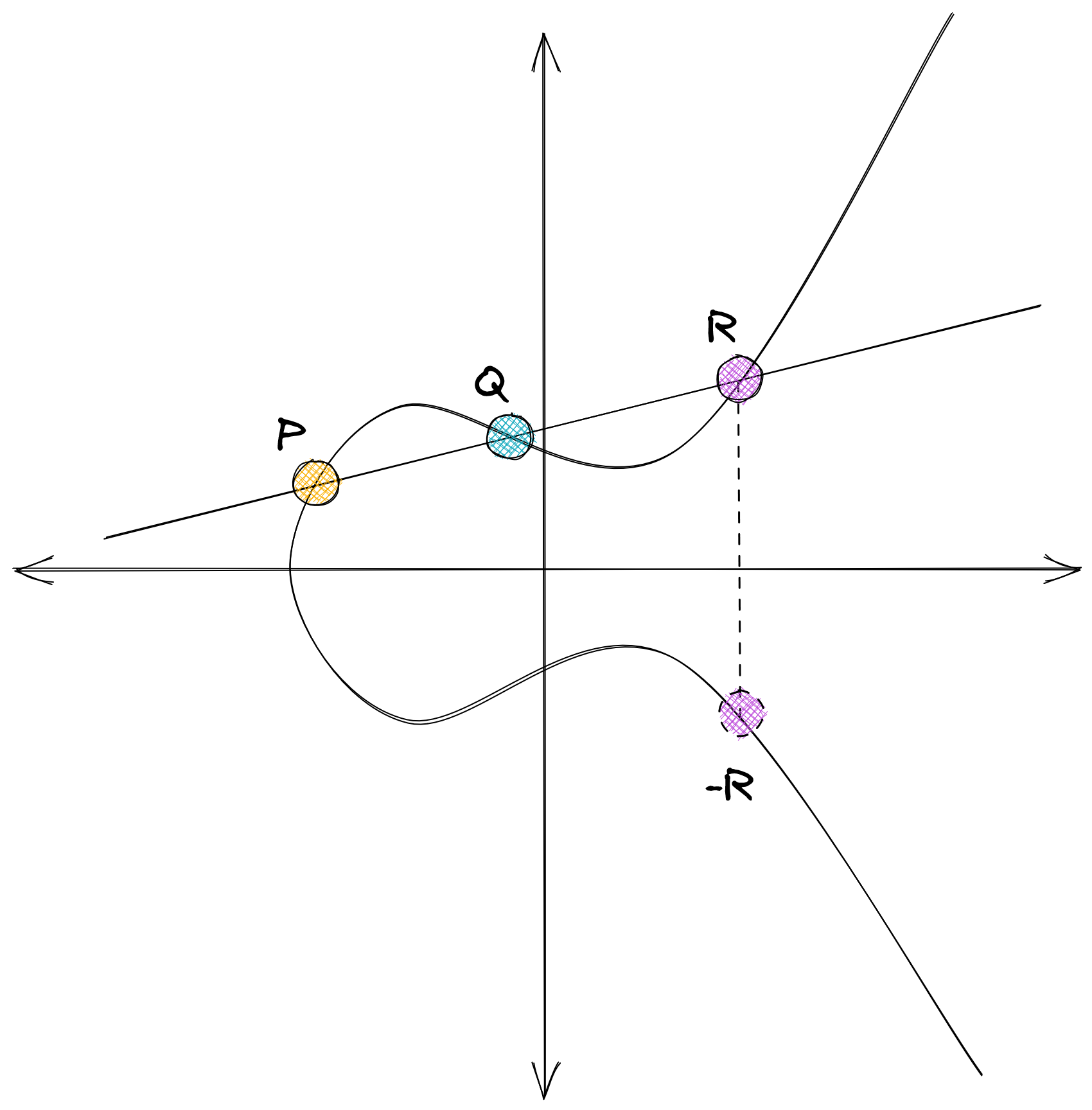

Despite the rules of addition being the same, the behavior is very different. Given two distinct points on a curve P and Q, their addition (P + Q = -R) looks like this:

What’s more, is when the points are the same (P = Q), the rules still work, using the tangent of the curve at that point:

Elliptic Curves for Cryptography

Generalizing this notion, you can use the same power properties of addition in other systems, to express scalar multiplication of points (P + P = 2P). This can extend into any number n, which is where things get really interesting. The formulas behind addition and scalar multiplication are reasonably efficient, but if you have some point G, with a scalar of n, producing nG = P, knowing G and P does not make finding n easy. Try it! If you find a fast route for all elliptic curves, congrats, you’ve solved a computational hardness problem that we base modern elliptic curve cryptography on top of. But also, congrats, you’ve now put us all in danger.

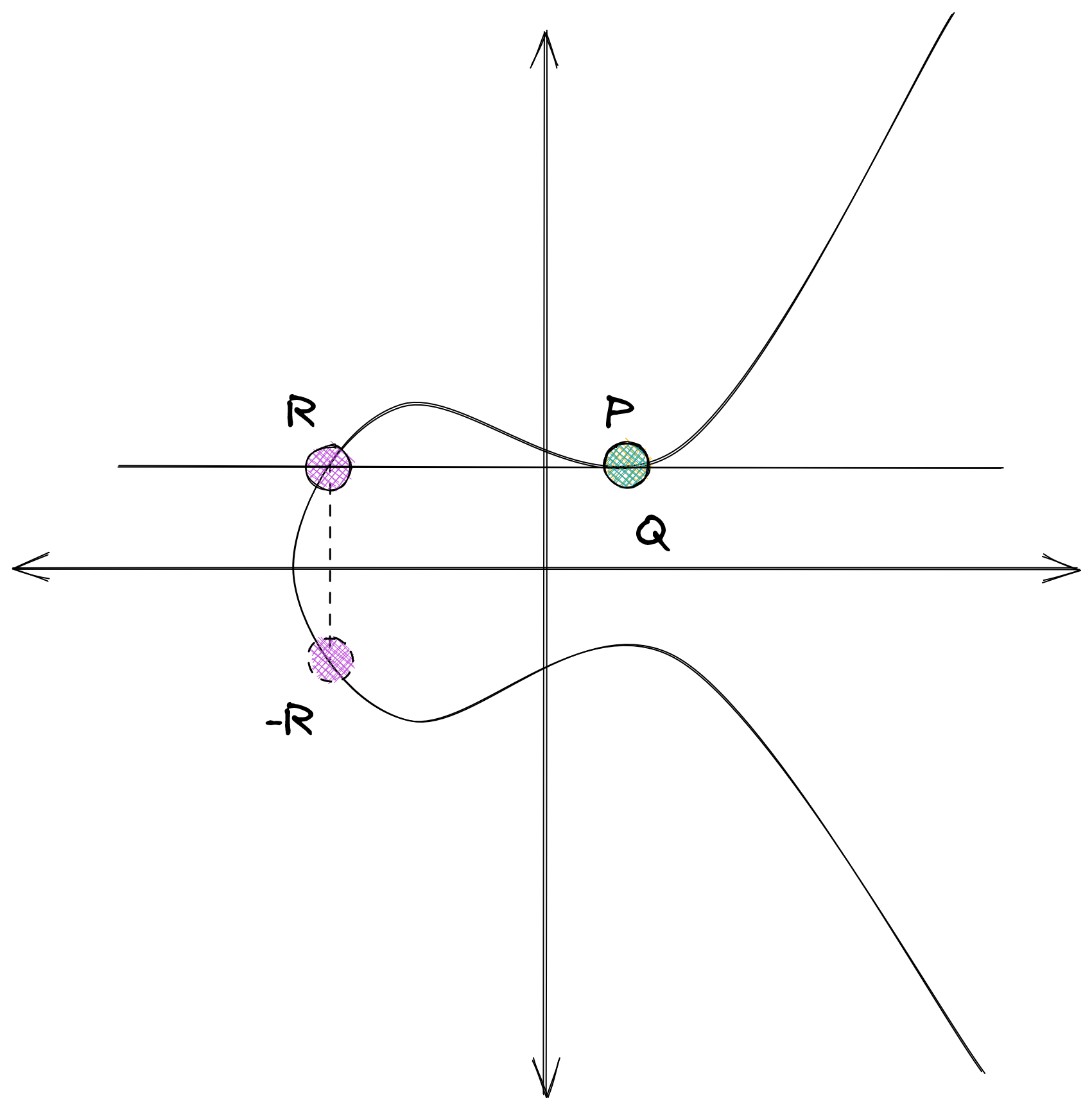

Back to the fun stuff. These numbers stretch into infinity thanks to the nature of the coordinate plane. But do they have to? As it turns out, there are certain additional restrictions that can add in our numbers game. You might have encountered the modulo (%) operator in writing code, which lets you return the remainder of an integer division. When you think about the number line of integers continuously looping around via modulo arithmetic, you’ll notice that the addition rules still apply successfully (14 + 16 = 30, 30 % 10 = 0, 14 % 10 = 4, 16 % 10 = 6, 4 + 6 = 10, 10 % 10 = 0). When you do this kind of wrapped arithmetic over the integers, you get what is called a finite ring. The range of that ring, i.e. the numbers possible in the set, is called the order. One interesting property is that multiplication still behaves the same when the order is prime. When the order is prime, we call this a finite field. The properties of finite fields just so happens to allow the same elliptic curve point arithmetic to succeed over integer points, looking like this:

What is especially handy about this is that on top of the math holding up, we now have a bounded size for representing the possible points.

Diffie-Hellman

One final observation I want to point out before we dive into the next section, is how exactly we use this in the context of cryptography. When you think about the difficulty in computing the scalar of a point n, given the point G and the result P of multiplying that scalar on the point (nG = P), there is a certain asymmetry that is valuable in a cryptographic context. If G is well defined and agreed upon, you can exchange a P value with others without fear that they may derive n. In this context, n becomes your private key, and P becomes the public key. This upholds when the security parameters are strong. When we discuss security parameters, obviously a smaller bound reduces the number of possible points for a curve, and also thus the security, but the definition of the curve too can have certain weaknesses. There are many criteria for safety in curves, and sometimes the applicability of that criteria only comes up in special use cases. That being said, safecurves.cr.yp.to is an immensely valuable resources for picking safe curves.

With a safe curve, you can build other mathematical abstractions around the notion of a private n and public P, such as digital signing algorithms, and secure key agreement. In fact, the key agreement process is so straightforward, that you might have already figured it out: since scalar operations are commutative, abG = baG, and since that applies to point addition too, you can consider the substitution: if two parties have key pairs (a, P = aG), (b, Q = bG), and exchanging the public keys is safe, all one needs to do to reach an agreed upon key is trade public keys, and use their private key as the scalar, as bP = b(aG) = a(bG) = aQ. Taking the x coordinate, you now have a key. And that is exactly how Elliptic Curve Diffie-Hellman works. So let’s build a ratchet out of this!

What is a Cryptographic Ratchet?

A ratchet in the literal sense is a device that can only move forward in one direction, unable to move backwards. In the cryptographic sense, very similarly the algorithm can only advance state forward (i.e. is not reversible), but unlike the physical device, does not loop back around to an initial state. Double-Ratchet leverages two different ratchets chained together to create a deterministic series of keys used to secure two party conversations. To start things off, let’s discuss the Diffie-Hellman ratchet.

Diffie-Hellman Ratchet

In the above description of Elliptic Curve Diffie-Hellman, we took advantage of the property that bP = b(aG) = a(bG) = aQ. When you consider the nature of two parties sending messages, one party is always the initiator. Assuming that each party has generated a collection of keypairs for one-time use (and assuming you can trust that the collection really is theirs, see more in our previous post about QR Authentication to see attack scenarios), you can envision the following scenario:

A common convention in two party scenarios in cryptography is to use the names Alice and Bob to represent two distinct honest actors.

- Alice and Bob have published a stream of public keys, the next available only after spending the one before, denoted

A_1, A_2, A_3, ..., A_nandB_1, B_2, B_3, ..., B_n. - Alice grabs Bob’s available public key,

B_1, and combines it with her private keya_1, producing a sending keySK_A. From Bob’s perspective, this is the receiving keyRK_B.SK_A = RK_B - Alice uses some symmetric cipher with the derived key for her message, and sends her public key

A_1, a reference telling Bob to use the private key forB_1, and the encrypted message. - Bob derives

RK_Bfrom the info, and decrypts the message. Bob takesA_1and combines it with his private keyb_2for the next key in his setB_2. This produces his sending keySK_B. From Alice’s perspective, this is the receiving keyRK_A. - Bob uses some symmetric cipher with the derived key for his message, and sends his public key

B_2, a reference telling Alice to use the private key forA_1, and the encrypted message. - Alice derives

RK_Afrom the info, and decrypts the message. Alice takesB_2and combines it with her private keya_2for the next key in her setA_2. This produces her next sending key. This continues for every phase of communication of sending and receiving.

This works okay for the singular message back and forth case, but how do we handle multiple messages from one side before the ratchet rotates? Reusing the same key comes at a cost to some security, because the duration without a response from the other side could happen for a while, meaning the recipient will need to hold onto their private key b_1 until they have confirmed a full rotation. Further, it also does nothing to preserve ordering of messages. So how do we resolve this problem? Enter the Key Derivation Function (KDF) Ratchet.

KDF Ratchet

The KDF ratchet employs the use of a hash-based message authentication code (HMAC). A common HMAC in this scenario is HMAC-SHA256. As a function, you can consider HMAC defined generally as HMAC(K, m) = H((K' xor opad) || H((K' xor ipad) || m). To break this down into what this means and why, let’s first label the pieces.

H(x)is a hash functionKis the secret keymis the messageopadis the outer padding, consisting of the byte0x5Crepeated for the byte length of the blockipadis the inner padding, consisting of the byte0x36repeated for the byte length of the blockK'is a derivation ofK, where when smaller than the block size is padded to the right with zeroes to the byte length of the block, or hashed withHto either less than or equal to the block size and padded to the right with zeroes if shorter.

This produces an output sized to the hash function. HMAC, in conjunction with an expansion function, creates a secure KDF with definable length. We can describe this function as HKDF(salt, K, m, L). the two additional elements here are salt, a non-secret random value or a byte string of zeroes to the length of the hash function, and L, the length of the KDF output in bytes. The process for the HKDF has two phases: an extract phase, which is just the invocation of the HMAC as HMAC(salt, K) (note the order), and using that value as a pseudo-random key PRK in the expand phase, which can be expressed in the following pseudocode:

int hl = <length_of_hash_function_in_bytes>

int n = (L / hl) + (L % hl > 0 ? 1 : 0)

string t = ""

for (byte i = 0x01; i <= n; i++) {

t = HMAC(PRK, t + m + i) // assume + is a concatenation operator

}

return t[0..n]We can now take this KDF and chain it with itself, using the output length in bytes to produce a block that can be split into constituent pieces as needed, at a minimum the same length as the input key, to be fed back into the KDF. This will get used in conjunction with the Diffie-Hellman Ratchet to produce: The Double-Ratchet.

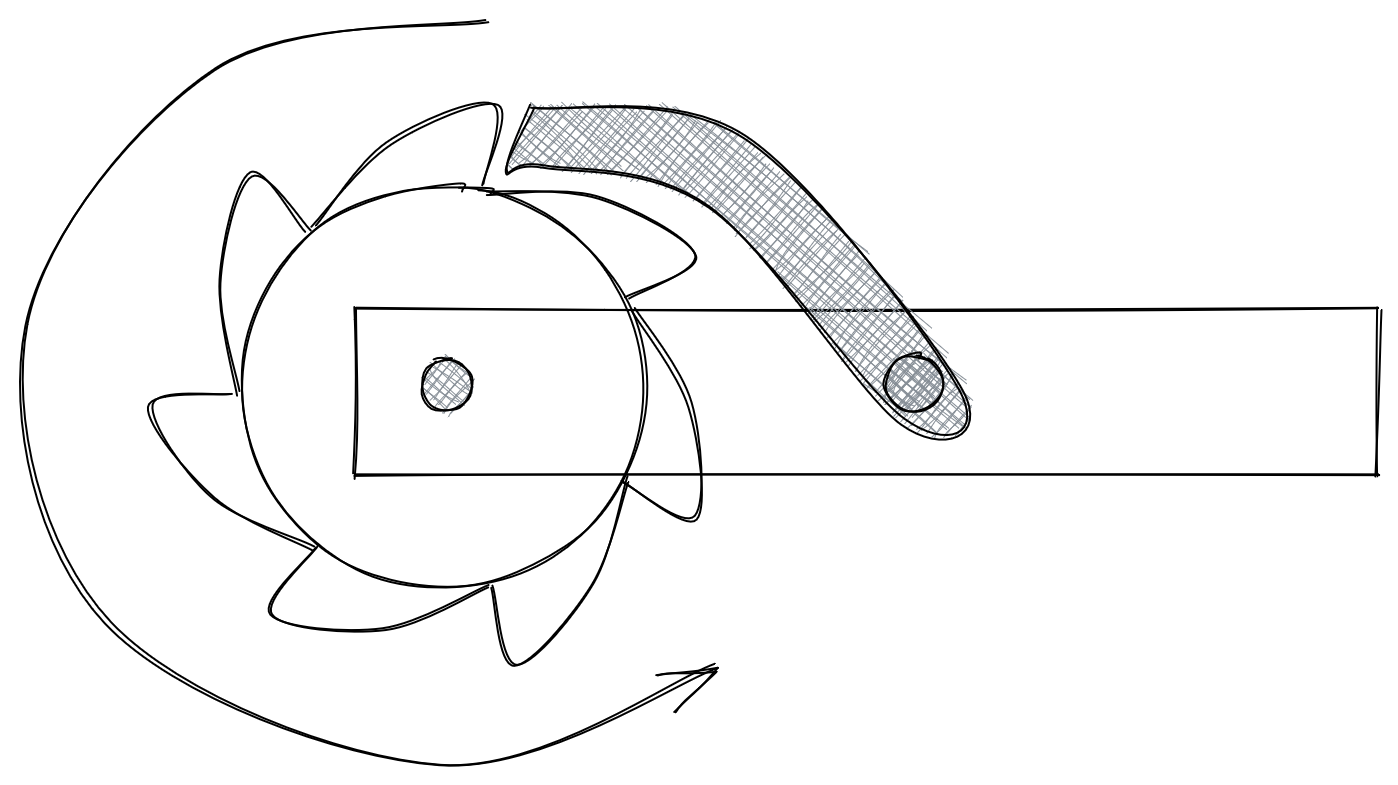

Double-Ratchet

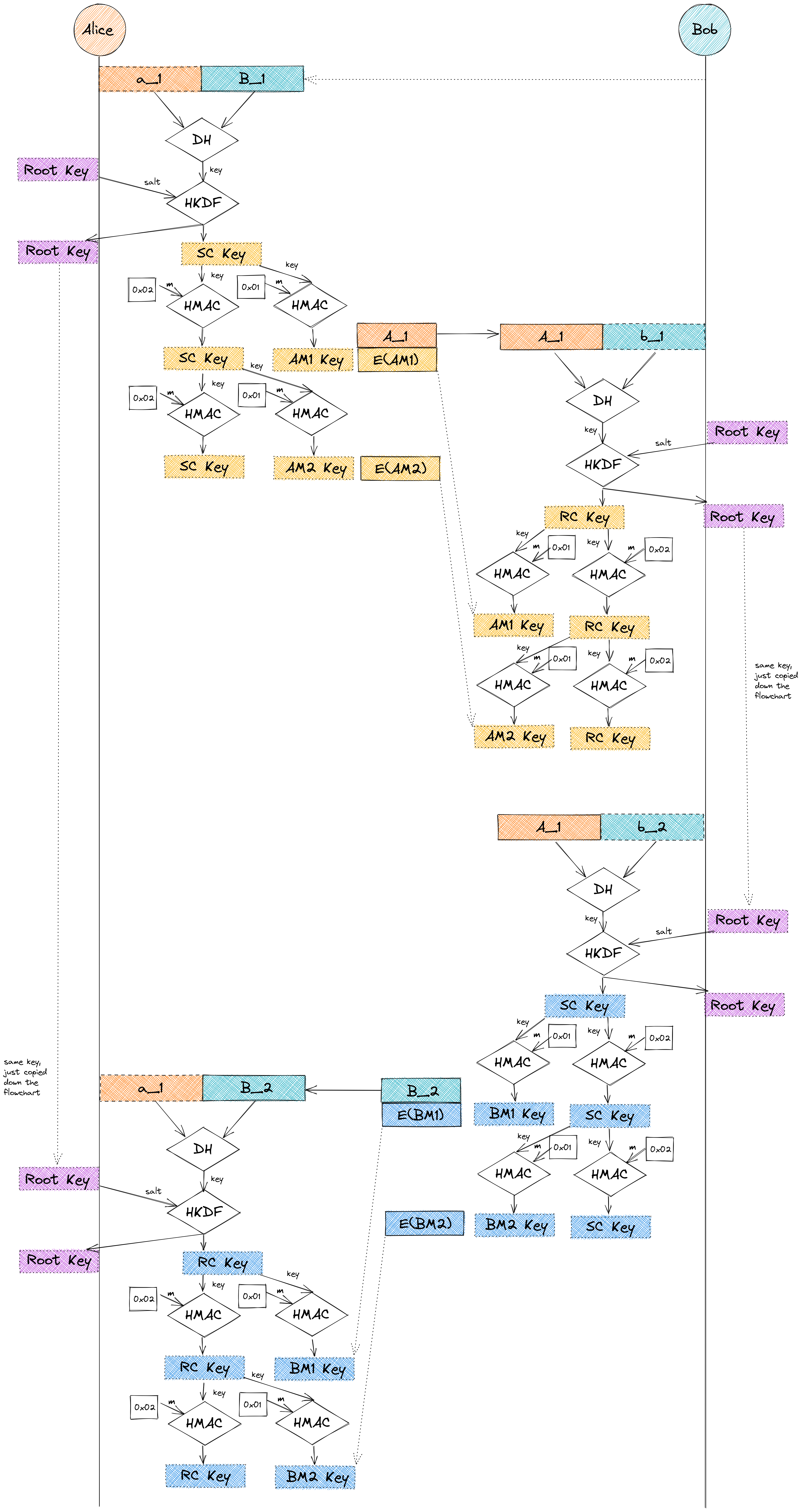

Each half of the rotation leads to new sending keys, the full rotation leads to receiving keys. An initial root key is established using a separate key agreement protocol (Signal uses X3DH) as the salt to the HKDF, subsequently the root key is the first 32 bytes derived from the HKDF. Both the sending and receiving keys from the DH ratchet are then fed into HKDF as the input keys, to produce a Sending Chain Key (SC Key) and Receiving Chain Key (RC Key). The chain keys are then further derived into message keys for Alice and Bob, using the HMAC with an input of 0x01 for the message, chain key for the key. The HMAC is used again with an input of 0x02 for the message, chain key again for the key to produce a new step of the chain key. Given this is hard to describe textually, I sketched it out with some distance between half rotations of the ratchet for readability:

Extending Double-Ratchet to the Multi-Party Setting: The Triple-Ratchet Protocol

Many approaches have existed to doing this, using the same basic cryptographic operations we’ve discussed so far. A naive approach considers the commutative property of elliptic curve scalar math used in ECDH for many parties, as abcG = acbG = cbaG, but given the scalars are private keys, we have a conundrum: Party A would have to calculate baG and caG, B would have to calculate abG and cbG, C would have to calculate acG and bcG, then send those values to each other for another scalar multiplication round to complete the key agreement. For parties of three, this isn’t a major dealbreaker (and there actually exists a special algorithm in the 3-party case that makes this only take one round instead of two), but in larger parties, n-1 rounds of message passing is unbearable, not to also mention this demands all parties be online at time of key agreement, which is untenable for relatively small chat rooms, let alone chat rooms in the size of 10,000 people. Matrix and others leverage a peer-to-peer process for sharing a key to join a group chat, using a ratchet called Megolm. The key problem (pardon the pun), noted by their own Limitations section, is that the properties that made Double-Ratchet so secure are not present, most notably the loss to future secrecy in the event of compromise - all subsequent messages will be decryptable without a new key agreement phase.

So how do we do it?

We’re Gonna Need A Bigger Ratchet

In the world of cryptography, many schemes have emerged to support elliptic curve cryptography in multiparty cases, typically with a dedicated threshold number of participants needed to be present to conduct the relevant cryptographic operation post key generation. While a variety of key generation processes have emerged, many come at great computational costs. This is extremely painful in a world where cellphones may be more powerful than the Nokia bricks of yesteryear, but certainly not ASIC crypto miners. Thankfully, some schemes have emerged that enable high performance operations for ECC, at the expense of bandwidth. One such approach happens to do this quite expediently. While the signing side of this research is far out of band for the scope of this article, the key generation process is particularly relevant, and hopefully not a bridge too far from the core concepts we’ve built up on so far.

The Third Ratchet

In the Double-Ratchet protocol, we describe Bob as a potential sender of messages, which makes sense because if there were no Bob, Alice would be talking to herself, and in which case, she doesn’t need cryptography, she needs a psych consult. But what if Alice had a generic “Bob” to talk to? That is, what if that “Bob” were actually the entire room? Then the actual Bob… hell, Carol, Dave, Elizabeth, too can all talk to the room. So long as a threshold number of the room members are present, a decryption key is possible, but we still get time-of validation that the sender is who they said they were with post-compromise repudiability.

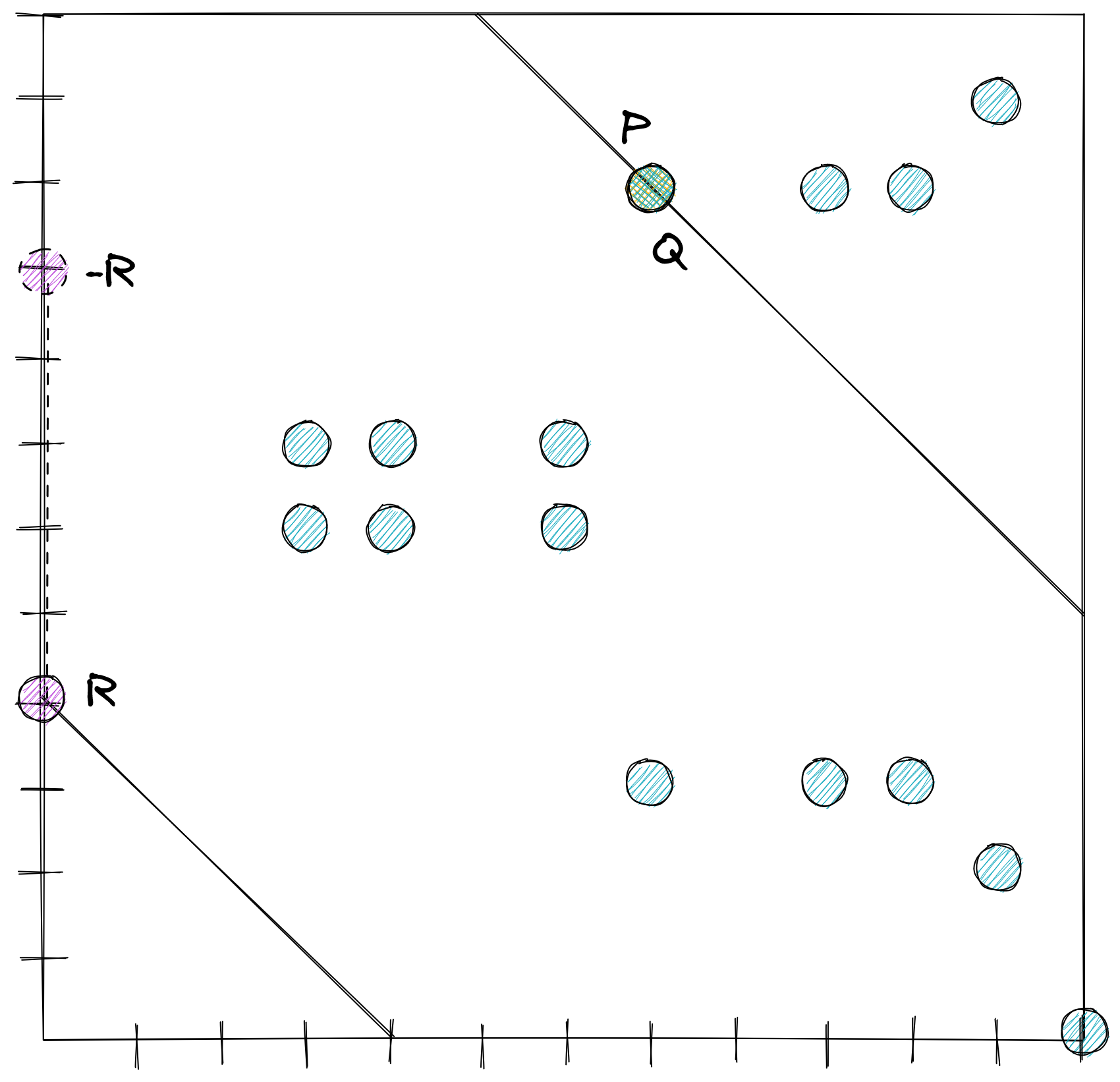

Taking the approach linked to above, the key generation KG(t, n, G) process works like this:

- Each party

igenerates a random polynomialp_i(x)of degreet - 1, wheretis the threshold number of users needed to be present at any time in the chat. - All parties pairwise send

p_i(j)to each other, wherejis the identifier of each party, and pairwise receivesp_j(i). - Each party computes its point

p(i)as the sum ofp_j(i), calculatesT_i = p(i)G, and sends a committed zero-knowledge proof-of-knowledge (ZKPoK). - Once all parties have received the commitments, each party sends their decommitments and

T_i. These two steps (3 and 4) secure against a malicious majority. If no malicious majority is necessary to consider, omit step 3 and 4 and sendT_i. - For all contiguous sets of threshold parties, perform Shamir reconstruction in the exponent, and confirm they are all identical. This is your public key for the room

P. The logical private key will be referred to asp

While additional steps will matter for signing, we will omit that for this article, and note one detail - G can be any valid point. Under typical distributed key generation, G is the common generator defined for the curve. But, if you recall the observation about ECDH and the commutative properties of scalars, we know that any sending party Alice will calculate aR, and to derive the relevant receiving keys, all parties need only re-run steps 3-5 of KG, but with Alice’s public key A instead of G. Provided a threshold number of participants are online, any group of t can complete the remaining steps, performing Shamir reconstruction in the exponent. No confirmation against other contiguous set outputs will be necessary this time, as we will be correct if and only if aP = pA.

To trigger a ratchet rotation, the room size must change, or a periodic reissuance interval must be encountered, whichever occurs first. To confirm room key succession, the aforementioned signing algorithm must be performed, the signature of which will become the new Root Key input.

Hey wait a minute… periodic reissuance? This is a distributed protocol, how will we agree on the time? That’s up to Part 3 of this series.

Code Wolfpack

Code Wolfpack